They Built the Demo to Capture Belief. They're Building the Product to Capture Margin. These Are Not the Same Thing.

A prediction about where AI actually goes, from someone who's been building it in production.

There’s a quiet transition happening that nobody is announcing.

The AI you fell in love with — the one that surprised you, that seemed to actually understand what you were asking, that made you feel like you were talking to something that had read everything and forgot nothing — that AI is a demo. It was always a demo. And it is quietly being retired in favor of something that makes economic sense.

This is not a story about AI failing. It’s a story about two different products wearing the same name, and what happens when the world realizes they’re not the same thing.

The Demo Was Designed to Feel Like a Mind

Think about why AI caught on the way it did. Not the technical explanation — the human one.

Everyone has something to say. Everyone is broadcasting. But the attention economy has a supply problem: there are more lectures than there are listeners, more ideas than there is bandwidth to receive them. You can post something genuinely important and watch it disappear into the feed in four minutes.

And then suddenly there’s a product that never gets bored. That never checks its phone. That takes everything you say and does something with it. You can wander off into geopolitics and then come back to your original question and it followed you the whole way. Nothing you said was wasted.

That experience of not being wasted is enormously powerful. It doesn’t matter that it isn’t actually listening. The feeling of being received is the product. That’s why it caught on faster than almost any consumer technology before it. It wasn’t meeting a demand for intelligence. It was meeting a demand for attention.

The problem is that product costs an extraordinary amount of money to run. And the companies selling it have investors to answer to.

What “Agentic AI” Actually Means

Somewhere in the last year, the vocabulary shifted. We stopped talking about AI assistants and started talking about AI agents. Autonomous systems. AI that doesn’t just answer — AI that acts.

Here is what actually happened.

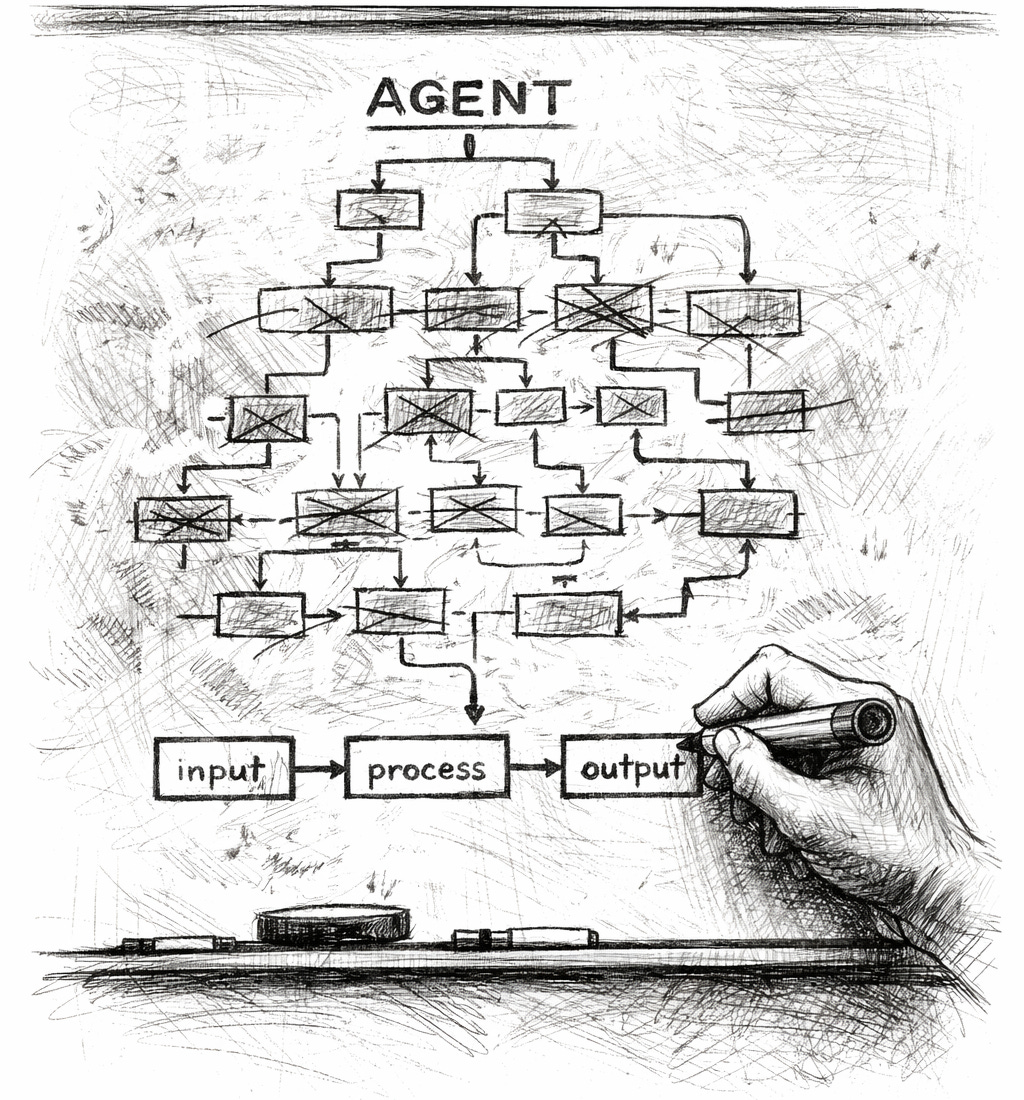

Researchers used the phrase “agentic behavior” to describe giving a language model the ability to call a tool — look something up, run a function, take an action. It was a technical description. Then marketing got hold of the word “agent” and stripped out the qualifier. Suddenly we weren’t talking about a model with access to a search tool. We were talking about an autonomous digital employee.

The gap between those two things is the entire AI industry right now.

What actually happens when you give a language model too many tools is that it gets confused. The context gets cluttered. Decisions that seemed obvious with two options become incoherent with twelve. So engineers do what engineers do: they constrain it. Give it fewer tools. Scope it tightly. Build a “researcher mode” with access to these tools, a “coder mode” with access to those tools, a “support mode” that only knows about this product.

And if that’s starting to sound like you’re just building specialized software — a custom workflow with a language model in the middle — you’re right. That’s exactly what it is. The “agent” fantasy collapses back into structured software the moment you try to build something that actually works.
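To make the scoping concrete, here is a minimal sketch of what "modes with restricted tools" tends to look like in code. Everything in it is hypothetical: the tool functions, the mode names, and the `call_tool` helper are illustrative stand-ins, not any particular framework's API. The point is only that the permission check is ordinary software sitting around the model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet


# Hypothetical tools; in a real system these would wrap actual integrations.
def web_search(query: str) -> str:
    return f"search results for {query}"


def run_tests(path: str) -> str:
    return f"ran tests in {path}"


def lookup_order(order_id: str) -> str:
    return f"status of order {order_id}"


# Registry of every tool the system knows about.
TOOLS: Dict[str, Callable[[str], str]] = {
    "web_search": web_search,
    "run_tests": run_tests,
    "lookup_order": lookup_order,
}


@dataclass(frozen=True)
class Mode:
    """A named scope that exposes only a small subset of the tools."""
    name: str
    allowed_tools: FrozenSet[str]


# Assumed mode names, mirroring the researcher / coder / support split.
MODES: Dict[str, Mode] = {
    "researcher": Mode("researcher", frozenset({"web_search"})),
    "coder": Mode("coder", frozenset({"run_tests"})),
    "support": Mode("support", frozenset({"lookup_order"})),
}


def call_tool(mode_name: str, tool_name: str, argument: str) -> str:
    """Run a tool the model asked for, but only if the mode permits it."""
    mode = MODES[mode_name]
    if tool_name not in mode.allowed_tools:
        raise PermissionError(
            f"tool {tool_name} is not available in {mode.name} mode"
        )
    return TOOLS[tool_name](argument)
```

In this sketch the model never sees more than one or two tools at a time, and a request outside the mode's scope is rejected by plain code rather than by the model's judgment.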

The move is away from the long-context, coherent, feel-like-a-mind experience and toward maximum constraint. Keep the model hidden. Minimize its surface area. The AI disappears into the stack the same way the Google search algorithm disappeared. You never talked to PageRank. It processed your query and delivered ten links. That’s where this goes.

The demo was the product that captured belief. The product is the infrastructure that captures margin. They are not the same thing, and the transition is already happening quietly.

What the Economically Viable Version Actually Is

Here’s the clearest way I know to say it.

The AI is the entry-level staff.

Not entry-level in the sense of inexperienced. Entry-level in the sense that it can follow written instructions for well-defined tasks, produce language outputs, operate within clearly defined parameters, and will never ask for a raise or complain about the culture. That’s the product that makes economic sense.

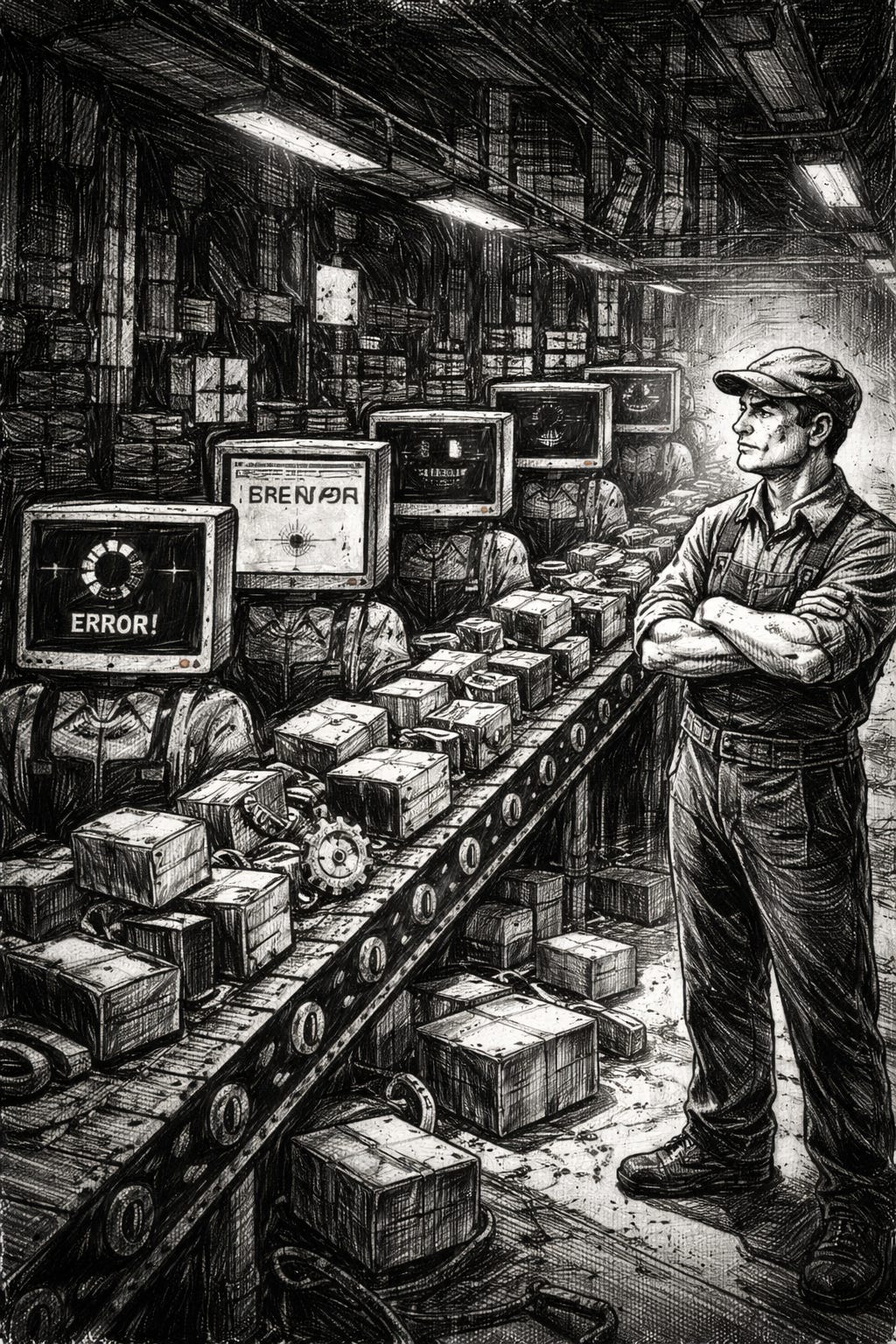

What a lot of executives thought they were buying was judgment. The ability to hand a problem to a system and have the system figure out what the problem actually was. That’s not what they got. They got a very fast junior employee with infinite confidence and no ability to know what it doesn’t know.

The constraint was never code production. We have always been able to produce code. The constraint was always good ideas — a clear understanding of what to build and why. AI accelerates the production of code significantly. It does not accelerate the production of good ideas at all. The bottleneck moves. Nobody planned for where it moves to.

So you get an interesting situation: massive increases in output, significant decreases in output quality, and an organizational structure that eliminated the senior people who could tell the difference.

The Prediction

Three things I’m willing to put my name on.

One. High error rates, lawsuits, walked-back product launches. Not because the technology is fraudulent but because it was deployed into organizations that didn’t understand what it was, by executives who were told it would work differently than it does. The finger-pointing starts in earnest when the bills come due.

Two. Specialists come back on their own terms. The people who actually understand the domain — the ones who got laid off or pushed aside in the rush to automate — will be the ones who get called to fix what went wrong. They will negotiate strict contracts with a lot of guarantees, because now their value is legible in a way it wasn’t before. They are the people the AI cannot replace: not because their skills are irreplaceable but because the AI already tried and failed publicly.

Three. The investment thesis collapses quietly. There is a version of this where the most appealing startup is one that has jettisoned all of its staff before it goes public — no messy humans, no public clashes about culture, just code maintained by AI and margins that make investors happy. That was always what a lot of investors actually wanted. What they didn’t account for is that removing the humans also removes the judgment, the institutional knowledge, the ability to adapt when something unexpected happens. And something unexpected always happens.

The prevailing investor sentiment about what AI will be able to do is going to look, in retrospect, like a tech demo that everyone mistook for a product roadmap. Charismatic CEOs sold visions of what the technology could become. The demos were real. The product timeline was not.

What This Isn’t

This isn’t an argument that AI is useless. I’ve been building production AI systems for years. I know what they can do inside well-constructed boundaries, grounded in source material, built into workflows with real guardrails. That value is real and significant and underutilized.

The problem isn’t the technology. The problem is the story being told about the technology, and who benefits from that story being believed.

The demo exists to capture belief. Belief generates subscriptions. Subscriptions fund data centers. Data centers are the actual product — the infrastructure play that justifies the valuation. What gets built on top of that infrastructure, when the demo economics stop making sense, is something narrower and less magical and considerably more useful than what was promised.

Language models are real. The AGI dream is a product.

Read the fine print.

Trevor Miller has been building production AI systems since before most companies knew what they were doing. He writes about what LLMs actually are, how they actually work, and what that means for the people building with them and living alongside them.